I finally had some time over the holidays to complete the first panel of the TorchStation. The core idea is to have a monitor box that sits on your desk and tracks distributed model training. The panel shown below is a prototype for displaying GPU usage and memory. I'll continue to post updates as I add more components.

The main challenge with this board was power: the LED bars alone drew around 1.2 A with every segment lit at full brightness, so I had to use an external power supply and share a common ground with the MCU. For the panel I used matte PLA at 3 mm. Wiring was the worst part: this panel alone required at least 32 wires, but the panel will hide them quite well. I'm planning to support up to 8 GPUs per node, which aligns with the typical maximum found in many cloud instances.

Here is the video, which was quite tricky to capture because the camera's exposure metering kept changing due to the LEDs (the video doesn't do justice to how cool these LEDs are; they are very bright and clear even in daylight):

For the interface I'm using an Arduino Mega (which is based on the ATmega2560) with Grove ports to make it easier to connect all of this, but I had to remove the VCC connections from all the ports so they could be fed from the external power supply. In the end it looks like this:

                 ┌────────── PC USB (5V, ≤500 mA)
                 │
            +5 V │
                 ▼
        ┌─────────────────┐
        │  Arduino Mega   │  Data pins D2…D13, D66…D71 → LED bars
        └─────────────────┘
                 ▲ GND (common reference)
                 │
    ┌────────────┴──────────────┐
    │  5V, ≥3 A switching PSU   │ ← external PSU
    └───────┬───────────┬───────┘
            │           │
            │ +5V       │ GND
            ▼           ▼
    ┌─────────────────────────────────┐
    │     Grove Ports (VCC rail)      │ ← external 5V injected here
    │       8 × LED Bar cables        │
    └─────────────────────────────────┘
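
To make the power reasoning behind this wiring concrete, here is a small back-of-the-envelope check in Python. It only uses the numbers quoted in this post (about 1.2 A peak for the LED bars, a 500 mA USB port, a 3 A supply). The function names and the 20% safety margin are my own assumptions for illustration, and the Arduino's own current draw is ignored.

```python
# Rough power-budget check using the figures quoted in the post.
LED_BARS_PEAK_A = 1.2    # measured with all segments lit at full brightness
USB_PORT_LIMIT_A = 0.5   # PC USB port limit shown in the diagram
PSU_RATING_A = 3.0       # lower bound of the external supply rating

def headroom_amps(supply_a: float, load_a: float) -> float:
    """Spare current in amps; a negative value means the supply is overloaded."""
    return supply_a - load_a

def can_power(supply_a: float, load_a: float, margin: float = 0.2) -> bool:
    """True if the load fits while keeping `margin` of the supply in reserve.

    The 20% default margin is an assumed rule of thumb, not a measured value.
    """
    return load_a <= supply_a * (1.0 - margin)

if __name__ == "__main__":
    for name, supply in (("USB port", USB_PORT_LIMIT_A), ("External PSU", PSU_RATING_A)):
        spare = headroom_amps(supply, LED_BARS_PEAK_A)
        verdict = "ok" if can_power(supply, LED_BARS_PEAK_A) else "not enough"
        print(f"{name}: headroom {spare:+.1f} A ({verdict})")
```

Under these assumptions the USB port falls well short of the peak LED load, while the external supply leaves comfortable room, which matches the decision to inject 5 V directly into the Grove VCC rail.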

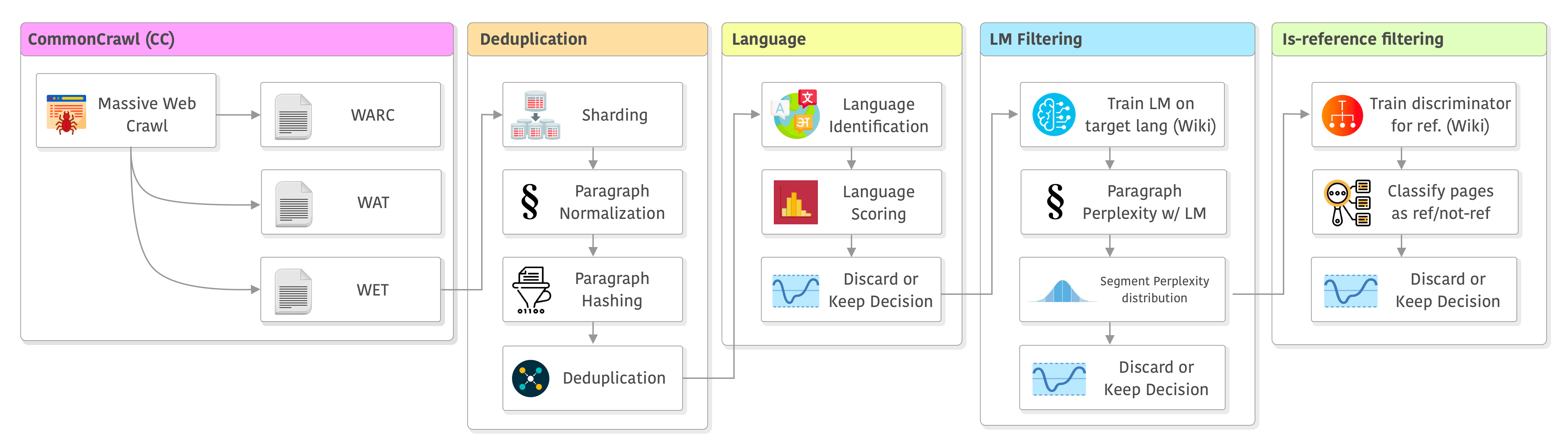
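
As a rough idea of what the host side could send to a panel like this, here is a minimal Python sketch that turns per-GPU utilization percentages into LED bar levels. This is not the actual TorchStation code: the 10-segment bar size, the function names, and the choice to leave unused bars dark are all assumptions for illustration. Only the limit of 8 GPUs per node comes from the post.

```python
import math

MAX_GPUS = 8    # the post plans for up to 8 GPUs per node
SEGMENTS = 10   # assumed number of segments per LED bar

def utilization_to_level(percent: float, segments: int = SEGMENTS) -> int:
    """Map a 0-100 % reading to a number of lit segments (round half up)."""
    clamped = max(0.0, min(100.0, percent))
    return math.floor(clamped * segments / 100 + 0.5)

def panel_levels(utilizations: list[float], max_gpus: int = MAX_GPUS) -> list[int]:
    """Return one level per bar, padding missing GPUs with 0 (bar off)."""
    if len(utilizations) > max_gpus:
        raise ValueError(f"panel supports at most {max_gpus} GPUs")
    levels = [utilization_to_level(u) for u in utilizations]
    return levels + [0] * (max_gpus - len(levels))

if __name__ == "__main__":
    # Hypothetical readings for a 4-GPU node.
    print(panel_levels([0.0, 25.0, 50.0, 100.0]))
```

Rounding half up (instead of Python's built-in `round`, which rounds halves to the nearest even number) keeps the mapping predictable at segment boundaries, so a 25% reading always lights the same number of segments.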

Just sharing ~100 slides about PyTorch 2 internals focusing on recent innovations (Dynamo, Inductor, and ExecuTorch). I had a lot of fun preparing this and hope you’ll enjoy it. I’m planning to record it soon.
